
8/24/2023

Elasticsearch filebeat docker

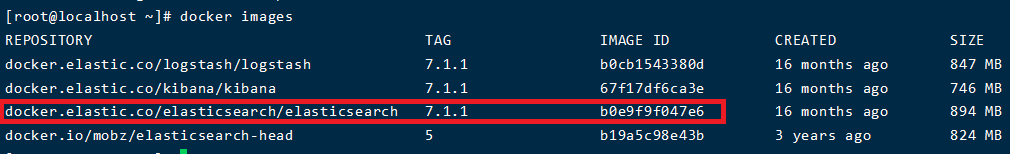

The image also persists /var/lib/elasticsearch - the directory that Elasticsearch stores its data in - as a volume. It has rich running options (so you can use tags to combine different versions), great documentation, and it is fully up to date with the latest versions of Elasticsearch, Logstash, and Kibana.īefore installing, make sure that the following ports are free: 5601 (for Kibana), 9200 (for Elasticsearch), and 5044 (for Logstash).Īlso, make sure that the vm_max_map_count kernel setting is set to at least 262144: sudo sysctl -w vm.max_map_count=262144īy default, all three of the ELK services (Elasticsearch, Logstash, Kibana) started. The ELK Stack Docker image that I recommend using is this one. There is still much debate on whether deploying ELK on Docker is a viable solution for production environments (resource consumption and networking are the main concerns) but it is definitely a cost-efficient method when setting up in development. You can install the stack locally or on a remote machine - or set up the different components using Docker. There are various ways of integrating ELK with your Docker environment. So, how does one go about setting up this pipeline? Installing the ELK Stack But the diagram above depicts the basic Docker-to-ELK pipeline and is a good place to start out your experimentation. Or, you could add an additional layer comprised of a Kafka or Redis container to act as a buffer between Logstash and Elasticsearch. For example, you could use a different log shipper, such as Fluentd or Filebeat, to send the Docker logs to Elasticsearch. Of course, this pipeline has countless variations.

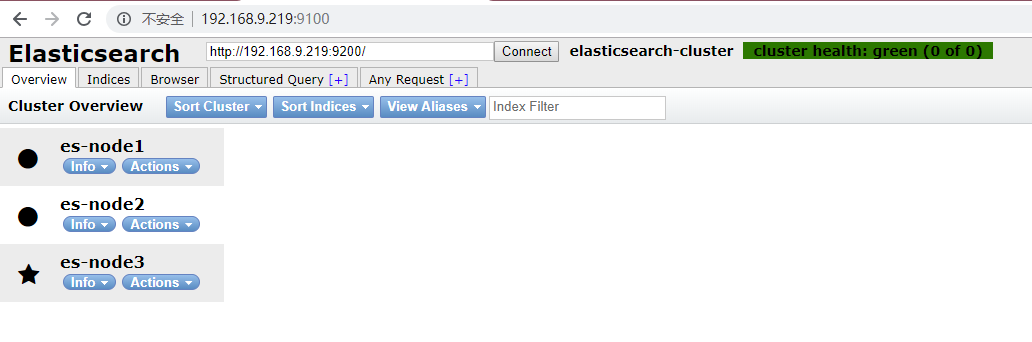

Logstash forwards the logs to Elasticsearch for indexing, and Kibana analyzes and visualizes the data.

Logs are pulled from the various Docker containers and hosts by Logstash, the stack’s workhorse that applies filters to parse the logs better. Understanding the PipelineĪ typical ELK pipeline in a Dockerized environment looks as follows: If you don’t want to manage ELK on your own, check out Logz.io Log Management.Īlas, this article is about setting up ELK for Docker, so let’s get started. To get around this, Logz.io manages and enhances OpenSearch and OpenSearch Dashboards at any scale – providing a zero-maintenance logging experience with added features like alerting, anomaly detection, and RBAC. Second, while getting started with ELK is relatively easy, it can be difficult to manage at scale as your cloud workloads and log data volumes grow – plus your logs will be siloed from your metric and trace data. To replace the ELK Stack as a de facto open source logging tool, AWS launched OpenSearch and OpenSearch Dashboards as a replacement. First, while the ELK Stack leveraged the open source community to grow into the most popular centralized logging platform in the world, Elastic decided to close source Elasticsearch and Kibana in early 2021. A few things to note about ELKīefore we get started, it’s important to note two things about the ELK Stack today. The next part will focus on analysis and visualization. This first part will explain the basic steps of installing the different components of the stack and establishing pipelines of logs from your containers. We will be writing a series of articles describing how to get started with logging a Dockerized environment with ELK. While it is not always easy and straightforward to set up an ELK pipeline (the difficulty is determined by your environment specifications), the end result can look like this Kibana monitoring dashboard for Docker logs: The ELK Stack (Elasticsearch, Logstash and Kibana) is one way to overcome some, if not all, of these hurdles. 
Transiency, distribution, isolation - all of the prime reasons that we opt to use containers for running our applications are also the causes of huge headaches when attempting to build an effective centralized logging solution. "curl -s -I | grep -q 'HTTP/1.The irony one faces when trying to log Docker containers is that the very same reason we chose to use them in our architecture in the first place is also the biggest challenge. ELASTICSEARCH_SSL_CERTIFICATEAUTHORITIES=config/certs/ca/ca.crt
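
The two pre-installation checks this article calls for (ports 5601, 9200 and 5044 being free, and vm.max_map_count being at least 262144) could be scripted roughly as follows. This is only a minimal sketch: the function names are hypothetical, the port test simply tries to bind on 127.0.0.1, and reading /proc/sys/vm/max_map_count assumes a Linux host.

```python
import socket
from pathlib import Path
from typing import List, Optional

# Ports and threshold taken from the article; everything else is illustrative.
REQUIRED_PORTS = {5601: "Kibana", 9200: "Elasticsearch", 5044: "Logstash"}
MIN_MAP_COUNT = 262144
MAP_COUNT_PATH = Path("/proc/sys/vm/max_map_count")  # assumption: Linux host


def port_is_free(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if this process can bind host:port, i.e. nothing holds it."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            return False
        return True


def map_count_ok(value: int, minimum: int = MIN_MAP_COUNT) -> bool:
    """Return True if the given vm.max_map_count value meets the minimum."""
    return value >= minimum


def read_map_count(path: Path = MAP_COUNT_PATH) -> Optional[int]:
    """Read vm.max_map_count from procfs; None if unavailable or unparsable."""
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        return None


def check_prerequisites() -> List[str]:
    """Collect human-readable problems; an empty list means all checks passed."""
    problems = []
    for port, service in REQUIRED_PORTS.items():
        if not port_is_free(port):
            problems.append(f"port {port} ({service}) is already in use")
    current = read_map_count()
    if current is None:
        problems.append("could not read vm.max_map_count")
    elif not map_count_ok(current):
        problems.append(
            f"vm.max_map_count is {current}, needs at least {MIN_MAP_COUNT}"
        )
    return problems


if __name__ == "__main__":
    issues = check_prerequisites()
    for issue in issues:
        print("WARNING:", issue)
    if not issues:
        print("All prerequisites look fine.")
```

If the script reports a low vm.max_map_count, the sysctl command from the article raises it for the running system; the script itself changes nothing.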
